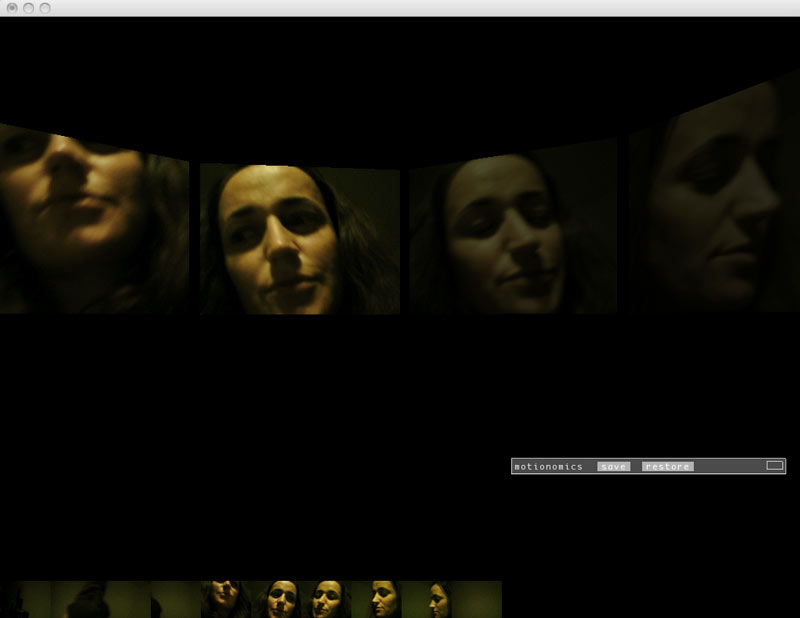

Point and Shout is a multimodal interaction sketch that explores expressive voice recognition combined with hand and finger tracking as a basis for playful interactions.

It takes advantage of voice recognition in uncommon ways by using onomatopoeias (words that imitate the sounds associated with the objects or actions they refer to) for a more expressive vocabulary and richer interaction.

Made at the NYC Perceptual Hackathon using:

Intel’s Perceptual Computing SDK + OpenFrameworks
